some films you can watch here: http://www.ubu.com/film/ballard.html

A Voice To Keep You Sane While Exploring The Depths Of Space

You might remember AgNES, the cute little robot head with one eye that would turn to follow bright colours, modelled as a sort of younger (or older?) sibling to Portal’s GLaDOS. Well, AgNES is evolving and growing – this time, AgNES even has a brain. Not a particularly smart brain, but it’s a start.

There are two broad lines of thought informing the design of AgNES. One is the concept of future archaeology, or how past and present technologies will appear to researchers hundreds or thousands of years from now – how protocols, interfaces, design conventions, and so on, will be accessible to people who will want to study our present society. In many cases, these systems will quite likely have to be reverse engineered to even make them functional, and then the work of trying to understand the social and cultural role they played may be pretty close to a guessing game. The other big line of thought is human deep-space exploration, or how we will adapt to sending people on really long deep-space voyages, sometimes on their own and sometimes with other people, trying to make sure they don’t go crazy in the process. AgNES is a little bit of both: it’s a companion robot designed to accompany deep-space explorers, but it’s also one that’s probably been derelict for a while and found by some other crew hundreds of years later, without major explanation or indication as to what happened. That also makes AgNES the only mechanism through which one can reconstruct the story, and a little bit of a mystery game to try to understand what happened under its watch.

So there’s a lot going on here, and many things to unpack – most of them driven by this underlying narrative scenario, which in turn motivates the design and informs many of its decisions. As a companion robot, the voice of AgNES is intended to keep the crew company and provide them with some persistent grounding to the real world, by giving them information or simply entertainment. AgNES can spew out facts and teach about things, but it can also tell stories or read poems. And most of this content is constantly changing, some of it even being randomly generated every time. In a way, it can be read as a science fiction reinterpretation of the tale of One Thousand and One Nights, where Scheherazade earned herself one more day to live every night by telling a new story. Similarly, AgNES is able to keep explorers engaged by providing one more piece of content at a time.

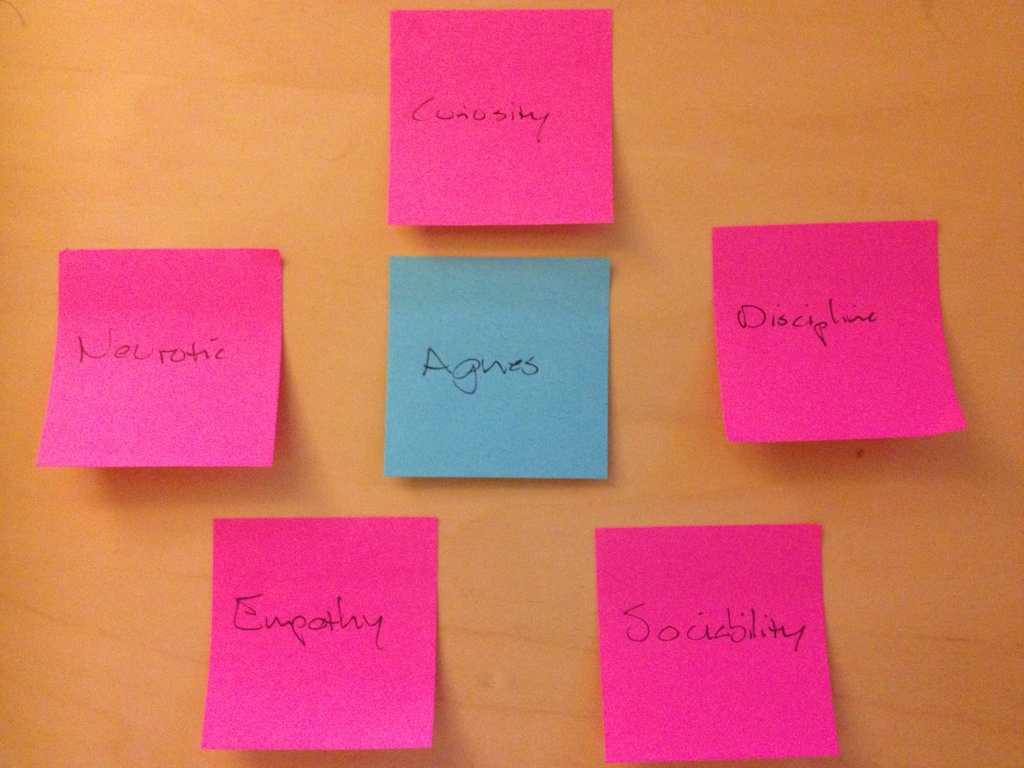

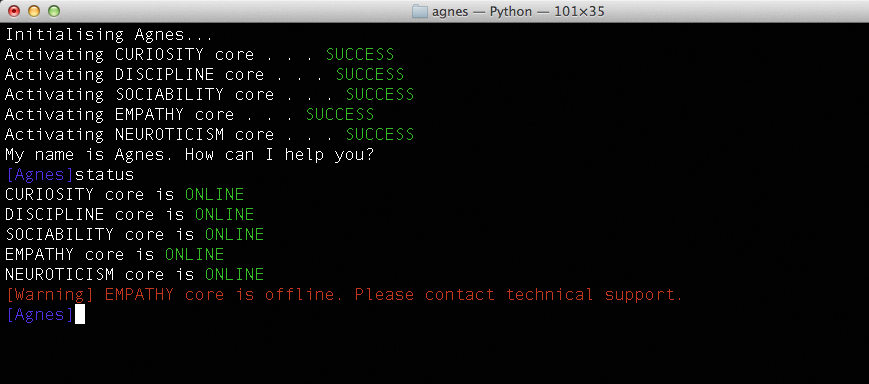

But where AgNES’s design gets really interesting is in her personality. The design of AgNES’s brain is modelled on the “Five Factor Model” (FFM) of personality, according to which personality can be described as operating across five different domains: openness, conscientiousness, extraversion, agreeableness, and neuroticism, with different personalities being variously strong or weak on each of these domains. AgNES follows the model closely, albeit with a few of the trait names modified slightly. Her personality is composed of five independent “cores”, each representing one trait, and each being activated or deactivated independently. Which personality cores are active at any given time affects AgNES’s behaviour roughly following the FFM description: if the openness – renamed as “curiosity” – core is turned off, for example, AgNES’s language becomes simpler, as do the stories she will tell. If the conscientiousness – renamed “discipline” – core is offline, she might be willing to comply with some commands that would otherwise be blocked for the user. In this way, you can experience several different versions of AgNES by experimenting with personality core configurations, where each of them might yield different information about herself, her purpose, her design, her creators, or her history.
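To make the core logic a bit more concrete, here is a minimal sketch of how a set of active cores could gate behaviour. This is not the actual AgNES code: the command name `reveal_logs` and the function names are made up for illustration, and only the “curiosity” and “discipline” cores described above are modelled.

```python
# Hypothetical rule table: commands that are refused while the discipline core is active.
BLOCKED_WHILE_DISCIPLINED = {"reveal_logs"}  # invented command name, for illustration


def is_allowed(command, cores):
    """Return True if the command may run under the given set of active cores."""
    if command in BLOCKED_WHILE_DISCIPLINED:
        # With discipline offline, otherwise-blocked commands go through.
        return "discipline" not in cores
    return True


def story_style(cores):
    """Pick simpler language and stories when the curiosity core is missing."""
    return "complex" if "curiosity" in cores else "simple"
```

Keeping the rules in a plain table like this means a new configuration only needs a new entry, not a new branch in every command.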

That’s where the interactive storytelling component comes in. Based on the present configuration, AgNES will give you some information. Under a different configuration, you might be able to explore that information further, or you might get different, even contradictory, information. Since there’s no documentation or knowledge about the context available, there’s really no way to tell – so as the hypothetical future researcher you’re playing, you can only make your best conjecture as to what’s going on.

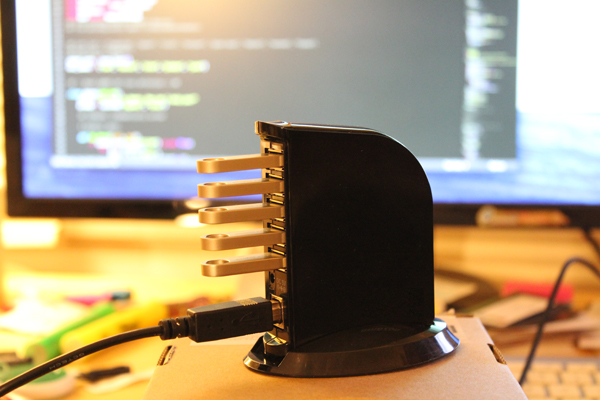

In terms of implementation, AgNES’s brain operates really as just a switch. The brain itself is a standard USB hub, and the personality cores are standard USB flash drives with renamed volume labels to tell them apart. The updated code for AgNES – as always, available on GitHub if anyone’s interested – checks which cores are plugged in after every command is executed and updates accordingly. Some commands will simply be nonresponsive unless the right configuration is set, while others will exhibit different behaviour and information. Many of the commands are set up so that they always give the user new information in randomised fashion: for instance, the til command (or “today I learnt”) will fetch and read out the summary of a random Wikipedia article, assuming all cores are in place (if the Curiosity core is off then the command does the same, except pulling from the Simple English Wikipedia). Other commands pull information from other sources around the web, especially randomised text generators: haikus, Post Modern randomly generated articles, FML tweets, and so on. The code pulls a source, parses the webpage, extracts the desired bit of text and then passes it over to the Mac’s built-in text-to-speech synthesizer to read out loud.
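For anyone curious what that loop might look like, here is a rough sketch under a few assumptions: the flash drives show up under `/Volumes` (as they do on a Mac), the volume labels are simply the core names, and the til command starts from Wikipedia’s `Special:Random` page. The real code on GitHub may well be organised differently; the function names here are my own.

```python
import os
import subprocess

# Only the two cores named in this post; the labels themselves are assumed.
KNOWN_CORES = {"curiosity", "discipline"}


def mounted_volumes(root="/Volumes"):
    """List mounted volume names on macOS; return an empty list if unavailable."""
    try:
        return os.listdir(root)
    except OSError:
        return []


def active_cores(volume_names, known=KNOWN_CORES):
    """Return the known core labels found among the mounted volume names."""
    return {name.lower() for name in volume_names} & set(known)


def til_source(cores):
    """Choose the Wikipedia edition for til: Simple English if curiosity is off."""
    host = "en.wikipedia.org" if "curiosity" in cores else "simple.wikipedia.org"
    return f"https://{host}/wiki/Special:Random"


def speak(text):
    """Read text aloud with the macOS `say` command."""
    subprocess.run(["say", text], check=True)
```

Calling `active_cores(mounted_volumes())` after each command would give the current configuration, which can then be handed to whatever picks the command’s behaviour.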

The result is often funny, and often awkward. It’s when commands break or fail to work as expected that crucial information about AgNES is revealed – when it forgets who it’s talking to and calls you by one of her developers’ names, or when it inadvertently reveals how to get access to some crucial piece of information. Working on this breadcrumb trail is what’s probably going to be the highest priority item for the next and final iteration, so that the AgNES simulation is actually “playable.” Additionally, I also need to improve how rules are managed in determining what each operation should do based on the active cores, and I need to clean up the interface further still – as well as integrating this iteration with the previous one, to actually have a moving, animated object to correlate with the synthesized voice you get from the computer.

I’m already getting really good feedback to consider for the final iteration – so any comments or suggestions are more than welcome! Also, I’ll try to get a video up soon to show how it actually operates, as it’s a little difficult to reproduce independently right now.

Why SciFi Gets The Blues.

http://99percentinvisible.org/episode/future-screens-are-mostly-blue/

What they don’t mention, but which supports their argument, is that the material science to create blue phosphors wasn’t economically feasible until just before 2000. It could be done before that, but it was expensive. So, yes, blue lasers, blue LEDs, in essence the color blue itself, used optically WAS indeed futuristic. The second thing they don’t bring up is the actual physiology of the eye. The human eye sees blue the LEAST. Or one could say the least sharply. That’s why compression algorithms can throw away much of the blue channel and it’s not missed. 4:2:2 anyone? (CORRECTED via Ben’s reply. See Notes.) Half the blue is gone. When designing and animating Science Fiction interfaces you can use blue to keep the action on the actors and not let what is on the display steal focus. This also allows you to put in all sorts of abstract, “frou-frou” widgets (the technical term is “nurnies” or “greebles”) that aren’t actually going to be seen in detail. They’re there and the audience senses them but the eye can’t focus on them to see what they actually are.
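For reference, 4:2:2 keeps the brightness (luma) plane at full resolution and halves the horizontal resolution of both colour-difference planes, Cb (blue-difference) and Cr (red-difference). Here is a toy sketch of that horizontal halving on a single chroma plane; averaging adjacent pairs is one simple choice assumed here, and real encoders may filter differently.

```python
def subsample_422(chroma_plane):
    """Halve horizontal chroma resolution by averaging each adjacent pair.

    chroma_plane is a list of rows of numeric samples. A trailing unpaired
    sample in an odd-width row is dropped in this simplified sketch.
    """
    return [
        [(row[i] + row[i + 1]) / 2 for i in range(0, len(row) - 1, 2)]
        for row in chroma_plane
    ]
```

The luma plane would be left untouched, which is why the detail you actually notice survives while half of the colour samples are gone.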

LawyeR: Exploring the future of legal work with machine learning and comics

“2H2K: LawyeR” is an in-progress project exploring the future impact of electronic document discovery and machine learning on the practice of the legal profession. I’m pursuing that topic by prototyping an interactive machine learning interface for document discovery and writing and illustrating a comic telling the story of a sysadmin in a 2050 law firm. This work is a part of a collaborative project I’m pursuing with John Powers, 2H2K, which imagines life in the second half of the 21st century.

Discovery is the legal process of finding and handing over documents in response to a subpoena received in the course of a lawsuit. Currently, discovery is one of the most labor-intensive parts of the work of large law firms. It employs thousands of well-paid lawyers and paralegals. However, the nature of the work makes it especially amenable to recent advances in machine learning. Due to the secretive and competitive nature of the field, much of the work has gone unpublished. In this project, I’m working to create a prototype interactive machine learning system that would enable a lawyer or paralegal to do the work of discovery much more efficiently and effectively than is currently possible. Further, I’m trying to imagine the cultural consequences of the displacement of a large portion of well-paid highly-skilled legal labor in favor of automated systems. What happens when a large portion of the white collar jobs in large corporate legal firms are eliminated through automation? What does a law firm look like in that world? What does the law look like?

For this first stage of the project, I’ve been working with the Enron email dataset to develop a classifier that can detect emails that are relevant to a legal case on insider trading. In the course of developing that classifier, I had to read and label 1028 emails from and to Martin Cuilla, a trader on Enron’s Western Canada desk. While this process might seem dry and technical, in practice it threw me into the midst of the personal details of Cuilla’s life, ranging from his management of the Enron fantasy football league to the planning of his wedding to his heavy gambling to his problems with alcohol and contact with recruiters in the later period of Enron’s decline. This experience is already common to lawyers and paralegals who are immersed in previously personal documents in the course of doing discovery on a case. The introduction of this interactive machine learning system would transform the shared soap opera experience of a large team of lawyers into the personal voyeurism of the individual distributed users of the system.
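To give a feel for what such a classifier does, here is a minimal bag-of-words naive Bayes sketch in plain Python. It is only an illustration of the general technique, not the classifier used in the project (the post does not say which model that is), and any training texts you feed it in place of real labelled emails are stand-ins.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase and split on whitespace."""
    return text.lower().split()


def train(examples):
    """Build word counts from (text, is_relevant) pairs.

    Assumes at least one example of each label is present.
    """
    word_counts = {True: Counter(), False: Counter()}
    doc_counts = Counter()
    for text, label in examples:
        doc_counts[label] += 1
        word_counts[label].update(tokenize(text))
    vocab = set(word_counts[True]) | set(word_counts[False])
    return word_counts, doc_counts, vocab


def relevance_score(model, text):
    """Return the log-odds that text is relevant; positive means 'relevant'."""
    word_counts, doc_counts, vocab = model
    total_rel = sum(word_counts[True].values())
    total_irr = sum(word_counts[False].values())
    score = math.log(doc_counts[True] / doc_counts[False])
    for word in tokenize(text):
        if word not in vocab:
            continue  # ignore words never seen during training
        # Add-one smoothing so unseen word/label pairs do not zero out the odds.
        p_rel = (word_counts[True][word] + 1) / (total_rel + len(vocab))
        p_irr = (word_counts[False][word] + 1) / (total_irr + len(vocab))
        score += math.log(p_rel / p_irr)
    return score
```

In an interactive setting, the emails with scores closest to zero are the ones the model is least sure about, so those are natural candidates to show a human reviewer next.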

In addition to this technical work, I’m also writing and drawing a comic telling the story of an individual working in a law firm in the year 2050. The comic is in the early stages, but I included rough versions of the first two pages in the presentation.

MIT and the First Wearable Computer

Yes, the first wearable computer was made here at MIT and was designed to cheat at a casino.

http://www.cs.virginia.edu/~evans/thorp.pdf

Could The Purple Wage Become a Thing of Reality?

2019- A future imagined by Syd Mead

Your new Nexus-6 Replicant Is Packaged and Ready to Go.

Slapsticks

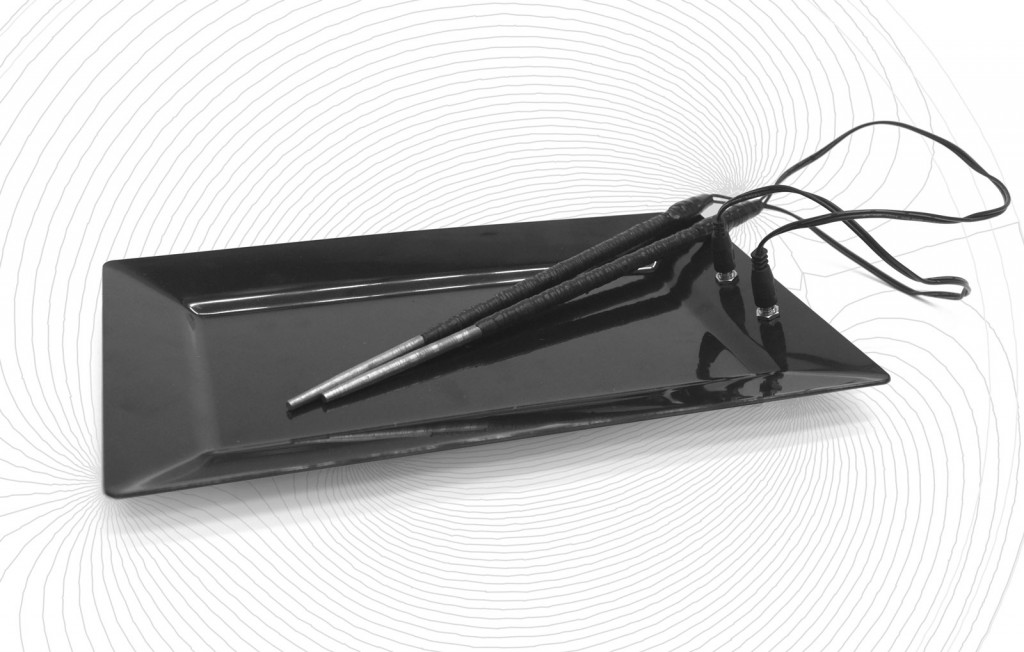

Slapsticks are a set of chopsticks that control what and how you eat. Built from stainless, low-carbon steel wrapped in copper wire, each Slapstick’s magnetism is individually controlled by a computer system supervising the trencherman’s eating order.

Inspired by the mediatronic chopsticks showcased in the novel “The Diamond Age” by Neal Stephenson, Slapsticks mainly monitor how fast you are eating – but also what you are eating. Whenever your eating behaviour departs from the computationally optimised diet-plan (COD), Slapsticks enforce better nutrition by attracting or repelling each other. If the trencherman still defies the COD, Slapsticks will raise their temperature until they are unusable.

Good eating behaviour and food selection are rewarded by supporting the eater magnetically.

Slapsticks are built from low-carbon steel with insulated copper wire wrapped around it. This setup produces a magnetic field when electric current is applied (electromagnetism). As with most electromagnets, the magnetic field disappears when the current is turned off. The rear part of each Slapstick is coated in rubber to ensure a secure grip and safely insulate the copper wire from the eater’s hand.
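As a rough guide to the physics, the field inside a long, tightly wound coil is approximately B = μ0 · μr · (N / L) · I. The sketch below just evaluates that textbook formula; the turn count, length, current and core permeability are placeholders you would have to measure or look up, not specifications of the actual Slapsticks.

```python
import math

MU_0 = 4 * math.pi * 1e-7  # vacuum permeability in T*m/A


def solenoid_field(turns, length_m, current_a, relative_permeability=1.0):
    """Approximate field (tesla) inside a long solenoid.

    relative_permeability stands in for the steel core; its real value
    depends on the material and is left as an assumption here.
    """
    return MU_0 * relative_permeability * (turns / length_m) * current_a
```

A zero current gives a zero field, and flipping the sign of the current flips the sign of the field, which is the basis for switching a pair of sticks between attracting and repelling.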